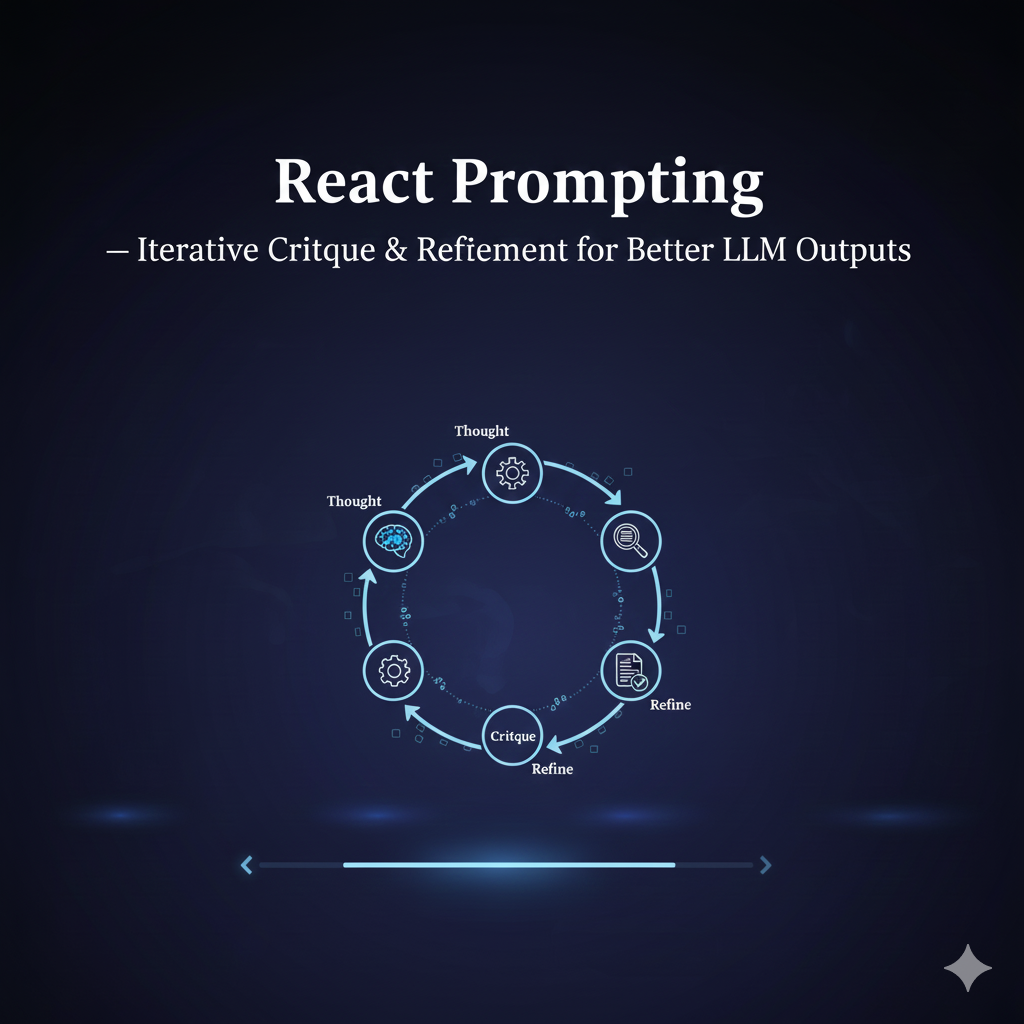

ReAct Prompting | Iterative Feedback Loops for Better LLM Outputs

🚀 ReAct (Reason + Act) Prompting — A Developer’s Guide

For ML engineers building reliable, interpretable, tool-augmented LLM agents.

TL;DR

ReAct is a prompting paradigm that interleaves reasoning (“Thought: …”) and tool actions (“Action: …”) in the same model trajectory. This lets your LLM both explain its thinking and interact with external tools or APIs, which can improve factual accuracy, traceability, and control. 🔍🤖 The approach comes from the original ReAct paper by Yao et al. (ICLR 2023).

Editor's Note: You can safely test the techniques in this guide using the PROMPT_ENGINE AI Prompt Generator. It is fully client-side and secure—your prompts never leave your browser and are never stored or used for AI training.

1. What Is ReAct? 🤔 + 🔧

- Reason: The model explicitly writes out reasoning traces (“Thought: …”) that expose its chain of reasoning.

- Act: The model generates action text (“Action: Search[…]”, “Action: CallAPI[…]”) that your code parses and executes against external tools.

- These alternate: the LLM thinks, then acts, then ingests the result as an observation, then thinks again, until it arrives at a Final Answer.

- This design makes the reasoning process more transparent and keeps the actions controllable: the model only proposes them, and your code decides whether and how to run them.
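To make the alternation concrete, here is a minimal sketch (Python, standard library only) of how a controller might split one model turn into a thought, an action, or a final answer. It assumes the `Action: ToolName[args]` grammar used in this guide's prompt template; `ParsedTurn` and `parse_turn` are hypothetical names, not part of any library.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Grammar assumed by this sketch: at most one "Action: Tool[args]" line per turn.
ACTION_RE = re.compile(r"^Action:[ \t]*(\w+)\[(.*)\][ \t]*$", re.MULTILINE)
THOUGHT_RE = re.compile(r"^Thought:[ \t]*(.*)$", re.MULTILINE)
# DOTALL lets a final answer span several lines.
FINAL_RE = re.compile(r"^Final Answer:[ \t]*(.*)$", re.MULTILINE | re.DOTALL)


@dataclass
class ParsedTurn:
    thought: Optional[str] = None
    tool: Optional[str] = None          # e.g. "Search"
    argument: Optional[str] = None      # text between the brackets, quotes removed
    final_answer: Optional[str] = None


def parse_turn(text: str) -> ParsedTurn:
    """Parse one model turn in the Thought / Action / Final Answer format."""
    turn = ParsedTurn()
    thought = THOUGHT_RE.search(text)
    if thought:
        turn.thought = thought.group(1).strip()
    final = FINAL_RE.search(text)
    if final:
        # A final answer ends the episode, so any action in the same turn is ignored.
        turn.final_answer = final.group(1).strip()
        return turn
    action = ACTION_RE.search(text)
    if action:
        turn.tool = action.group(1)
        turn.argument = action.group(2).strip().strip('"')
    return turn


turn = parse_turn('Thought: I need background.\nAction: Search["ReAct paper"]')
print(turn.tool, turn.argument)  # Search ReAct paper
```

If a turn contains neither a valid action nor a final answer, both fields stay `None`; a controller can treat that as a format error and report it back to the model as an observation.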

2. Why Developers Should Use ReAct ✅

- Improved Factuality & Grounding
  - By interleaving searches or API calls, your model can fetch real information instead of relying only on what it memorized during training, which reduces (though does not eliminate) hallucination.
  - The reasoning is grounded in fresh evidence, which tends to improve correctness.

- Interpretability & Debugging
  - Every action comes with a “Thought” justification, so you can trace the model's stated reason for each step.
  - Useful for debugging, audits, or adding human-in-the-loop checks.

- Simpler Agent Architecture
  - Rather than separate planning and executing modules, a single LLM loop handles both.
  - Less orchestration glue is needed: just a simple controller plus tool adapters.

- Robustness in Interactive Tasks
  - Whether answering questions, calling APIs, or interacting with simulators, ReAct naturally handles multi-step, interactive workflows.
  - The original paper evaluates it on question answering (HotpotQA), fact verification (FEVER), a text-based game (ALFWorld), and web shopping (WebShop).

3. When & Where to Use ReAct vs Other Paradigms 💡

| Use-case | Recommended Approach |
|---|---|
| Pure internal reasoning, no external tools | Chain-of-Thought (CoT) |
| Exploring alternative solution paths but not calling tools | Tree-of-Thought (ToT) |
| Reasoning + tool usage required (search, APIs, simulations) | ReAct |
| Complex tool orchestration, stateful agents, long-running sessions | Agent frameworks (LangChain, custom systems), often built on ReAct-like patterns |

4. Key Components (Developer’s Perspective) 🛠️

- Prompt Template
  - Instructs the model to alternate between `Thought:` and `Action:` lines, and to wrap tool calls in a simple grammar.

- Tool Adapters / Wrappers
  - Deterministic code that interprets `Action:` tokens and calls the real tools (search, API, DB, simulator).
  - Converts results into observation strings.

- Controller / Orchestrator
  - Runs a loop: feed the LLM, parse actions, execute them, feed back observations.
  - Maintains state, enforces a maximum number of actions, and ends when “Final Answer:” is found.

- Verifier / Validator
  - After generating a final answer, optionally run a verifier module (LLM or deterministic) to score correctness.
  - Useful to detect low-confidence or incorrect outputs.

- Logging / Lineage
  - Record each `Thought` / `Action` / `Observation` triplet, along with the model version and token usage.
  - Crucial for audit, replay, and debugging in production.
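The components above fit together in a short loop. Below is a minimal sketch of a controller, assuming a generic `llm(prompt, stop)` callable that returns the model's next chunk of text and honors stop sequences (many completion APIs accept a stop list, but check your client). Tool adapters are plain functions in a dict, each step is appended to a trace for logging, and a hard step limit prevents runaway loops. `run_react` and `scripted_llm` are hypothetical names; `scripted_llm` replays canned responses so the loop can run without a real model.

```python
import re
from typing import Callable, Dict, List, Tuple

ACTION_RE = re.compile(r"^Action:[ \t]*(\w+)\[(.*)\][ \t]*$", re.MULTILINE)
FINAL_RE = re.compile(r"^Final Answer:[ \t]*(.*)$", re.MULTILINE | re.DOTALL)

LLM = Callable[[str, List[str]], str]   # (prompt, stop sequences) -> completion
Tool = Callable[[str], str]             # argument string -> observation string


def run_react(llm: LLM, tools: Dict[str, Tool], task: str,
              max_steps: int = 5) -> Tuple[str, List[dict]]:
    """Run Thought/Action/Observation steps until a Final Answer or the step limit."""
    prompt = f"Task: {task}\n"  # system instructions omitted for brevity
    trace: List[dict] = []      # one record per step, for logging and replay
    for step in range(max_steps):
        # Ask the model to stop before "Observation:" so it cannot invent tool results.
        output = llm(prompt, ["\nObservation:"])
        prompt += output
        final = FINAL_RE.search(output)
        if final:
            answer = final.group(1).strip()
            trace.append({"step": step, "output": output, "final": answer})
            return answer, trace
        match = ACTION_RE.search(output)
        if match is None:
            observation = "Error: no valid Action or Final Answer found."
        elif match.group(1) not in tools:
            observation = f"Error: unknown tool {match.group(1)!r}."
        else:
            arg = match.group(2).strip().strip('"')
            try:
                observation = tools[match.group(1)](arg)
            except Exception as exc:  # report tool failures back to the model
                observation = f"Error: {exc}"
        trace.append({"step": step, "output": output, "observation": observation})
        prompt += f"\nObservation: {observation}\n"
    return "Stopped: step limit reached without a Final Answer.", trace


def scripted_llm(responses: List[str]) -> LLM:
    """Fake model for testing: returns the canned responses in order."""
    it = iter(responses)
    return lambda prompt, stop: next(it)


llm = scripted_llm([
    'Thought: I should look this up.\nAction: Search["capital of France"]',
    "Thought: The observation answers the question.\nFinal Answer: Paris",
])
tools = {"Search": lambda q: "Paris is the capital of France."}
answer, trace = run_react(llm, tools, "What is the capital of France?")
print(answer)  # Paris
```

Unknown tools and malformed turns are reported back as `Error:` observations rather than raising, which gives the model a chance to correct itself within the step budget.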

5. Prompt Templates (Text-Only, Easy to Copy) 📄

Reason + Act pattern

SYSTEM: You are an intelligent assistant that solves tasks by thinking and acting.

When you think, start with "Thought:".

When you want to perform a tool operation, write "Action: <ToolName>[<args>]".

After each Action, I will run it and return an "Observation:" line.

Continue this until you produce a "Final Answer:".

USER: Task: <your task description here>
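In practice the template also needs to tell the model which tools exist, and section 7 recommends including a few in-context examples. A minimal sketch that assembles the template above from a tool registry; the registry format (tool name mapped to a one-line description), the `build_react_prompt` name, the tool descriptions, and the example trace are assumptions for illustration.

```python
from typing import Dict, List


def build_react_prompt(tools: Dict[str, str], examples: List[str], task: str) -> str:
    """Assemble instructions, the allowed tools, few-shot traces, and the task."""
    tool_lines = "\n".join(
        f"- {name}[<args>]: {desc}" for name, desc in sorted(tools.items())
    )
    parts = [
        "SYSTEM: You are an intelligent assistant that solves tasks by thinking and acting.",
        'When you think, start with "Thought:".',
        'When you want to perform a tool operation, write "Action: <ToolName>[<args>]".',
        "You may only use these tools:",
        tool_lines,
        'After each Action, I will run it and return an "Observation:" line.',
        'Continue this until you produce a "Final Answer:".',
    ]
    for i, example in enumerate(examples, start=1):
        parts.append(f"\nExample {i}:\n{example.strip()}")
    parts.append(f"\nUSER: Task: {task}")
    return "\n".join(parts)


# Hypothetical tool descriptions and a hand-written example trace.
TOOLS = {
    "Search": "web search; returns short text snippets",
    "Lookup": "find a phrase in the last retrieved page",
}
EXAMPLE = """
Thought: I need to find who proposed ReAct.
Action: Search["ReAct: Synergizing Reasoning and Acting in Language Models"]
Observation: ReAct: Synergizing Reasoning and Acting in Language Models, Yao et al.
Thought: The observation names the authors.
Final Answer: Yao et al.
"""

print(build_react_prompt(TOOLS, [EXAMPLE], "Who proposed chain-of-thought prompting?"))
```

Listing tools explicitly also gives the controller something to validate against: any `Action:` naming a tool outside this list can be rejected.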

Verifier / Scoring template

SYSTEM: You are a verifier. Given the task, the candidate answer, and the interaction trace (thoughts, actions, observations), provide:

1) Confidence score (0.0–1.0)

2) Short explanation

3) List of failing checks (if any)

USER:

Task: <task description>

Candidate: <candidate answer>

Trace: <full log of Thought / Action / Observation>

Return JSON: {"score": 0.90, "reasons": "...", "failures": ["..."]}
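The verifier's reply is itself model output, so it is worth validating before acting on the score. A minimal sketch, assuming the JSON shape shown in the template and a hypothetical review threshold of 0.7 (tune it against your own labeled traces); `Verdict`, `parse_verdict`, and `needs_review` are illustrative names.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class Verdict:
    score: float
    reasons: str
    failures: List[str]


def parse_verdict(raw: str) -> Verdict:
    """Parse the verifier's JSON reply and check its shape; raise ValueError if invalid."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("verifier reply must be a JSON object")
    score = data.get("score")
    if isinstance(score, bool) or not isinstance(score, (int, float)) or not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be a number in [0, 1], got {score!r}")
    failures = data.get("failures", [])
    if not isinstance(failures, list) or not all(isinstance(f, str) for f in failures):
        raise ValueError("failures must be a list of strings")
    return Verdict(float(score), str(data.get("reasons", "")), failures)


def needs_review(raw: str, threshold: float = 0.7) -> bool:
    """True if the answer should go to a human: low score, failed checks, or bad JSON."""
    try:
        verdict = parse_verdict(raw)
    except ValueError:
        return True  # an unparseable verdict is itself a reason to escalate
    return verdict.score < threshold or bool(verdict.failures)
```

Treating a malformed verdict as "needs review" rather than "pass" keeps the failure mode conservative.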

6. Concrete Scenarios & Examples 💼

Scenario A – QA with Retrieval

- Task: Answer a multi-hop question using web search.

- Flow:
  - Thought: “I should search for background …”
  - Action: `Search["Key concept"]`
  - Observation: snippet from web
  - Thought: “This snippet says X, but I need more detail …”
  - Action: `Search["More detailed query"]` → …
  - Final Answer: a grounded summary + citations

Benefit: Less hallucination, more traceable reasoning.
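The main job of a retrieval adapter here is to keep observations short and tag each snippet with a source id the model can cite in its final answer. A minimal sketch, with a tiny in-memory corpus and naive keyword-overlap ranking standing in for a real search API; the corpus contents, `search_tool`, `k`, and `max_chars` are all assumptions.

```python
import re
from typing import Dict, List, Set

# Hypothetical corpus standing in for a real search backend.
CORPUS: Dict[str, str] = {
    "doc1": "ReAct interleaves reasoning traces with task-specific actions.",
    "doc2": "Chain-of-thought prompting elicits step-by-step reasoning without tools.",
    "doc3": "HotpotQA is a multi-hop question answering dataset.",
}


def _tokens(text: str) -> Set[str]:
    """Lowercased alphanumeric tokens, used for naive keyword matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_tool(query: str, k: int = 2, max_chars: int = 80) -> str:
    """Return up to k snippets ranked by keyword overlap, each tagged with its source id."""
    q = _tokens(query)
    scored = [(len(q & _tokens(text)), doc_id, text) for doc_id, text in CORPUS.items()]
    ranked = sorted(scored, key=lambda s: (-s[0], s[1]))  # best overlap first, ties by id
    hits: List[str] = [f"[{doc_id}] {text[:max_chars]}" for score, doc_id, text in ranked if score > 0]
    return " | ".join(hits[:k]) if hits else "No results."


print(search_tool("multi-hop question answering"))
# [doc3] HotpotQA is a multi-hop question answering dataset.
```

Returning an explicit "No results." string (instead of an empty observation) gives the model a clear signal to reformulate its query.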

Scenario B – API-Driven Assistant

- Task: “Schedule a meeting, check free slots, send invites.”

- Flow:
  - Thought: “Let me check the calendar”
  - Action: `CallAPI[Calendar.list, user_id]`
  - Observation: JSON list of free slots
  - Thought: “I found slots; pick one that works”
  - Action: `CallAPI[Calendar.create, slot, invitees]`
  - Observation: “Meeting created”
  - Final Answer: “Meeting scheduled successfully for …”

Benefit: Safe, auditable external calls + rationale for every step.
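For API-driven tools, argument validation belongs in the adapter, not the prompt. A minimal sketch that parses `CallAPI[Method, arg, ...]` arguments against an allowlist and calls a fake in-memory calendar. The method names mirror the flow above; `FakeCalendar`, its sample data, `make_call_api`, and the comma-separated argument convention are hypothetical.

```python
from typing import Callable, Dict, List


class FakeCalendar:
    """In-memory stand-in for a real calendar API (hypothetical data)."""

    def __init__(self) -> None:
        self.free = {"alice": ["2024-06-03T10:00", "2024-06-03T14:00"]}
        self.events: List[dict] = []

    def list_free(self, user_id: str) -> str:
        return ", ".join(self.free.get(user_id, [])) or "No free slots."

    def create(self, slot: str, *invitees: str) -> str:
        if not invitees:
            raise ValueError("at least one invitee is required")
        self.events.append({"slot": slot, "invitees": list(invitees)})
        return f"Meeting created at {slot} with {', '.join(invitees)}."


def make_call_api(cal: FakeCalendar) -> Callable[[str], str]:
    # Allowlist: only these methods may be invoked, each with a minimum argument count.
    methods: Dict[str, tuple] = {
        "Calendar.list": (cal.list_free, 1),
        "Calendar.create": (cal.create, 2),
    }

    def call_api(raw_args: str) -> str:
        """Handle the text inside CallAPI[...]: method name first, then arguments."""
        name, *args = [a.strip() for a in raw_args.split(",")]
        if name not in methods:
            return f"Error: method {name!r} is not allowed."
        fn, min_args = methods[name]
        if len(args) < min_args or not all(args):
            return f"Error: {name} needs at least {min_args} non-empty argument(s)."
        try:
            return fn(*args)
        except (TypeError, ValueError) as exc:  # wrong arity or rejected values
            return f"Error: {exc}"

    return call_api


call_api = make_call_api(FakeCalendar())
print(call_api("Calendar.list, alice"))
print(call_api("Calendar.delete, everything"))
```

Because every rejection comes back as an `Error:` observation, the audit log records both what the model asked for and why it was refused.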

Scenario C – Simulated / Interactive Environments

- Task: Agent navigating a simulated environment (game, robot, WebShop, etc.).

- Flow:
  - Thought: “What objects are around me?”
  - Action: `Look[]`
  - Observation: description of surroundings
  - Thought: “There’s a key here; I can pick it up.”
  - Action: `Pick["key"]`
  - … repeat, then conclude with a final plan or result.

Benefit: The agent doesn’t just “think”; it acts, checks, and rethinks — making it more robust.
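In a simulated environment the "tools" are the environment's own actions. A minimal sketch of an entirely hypothetical toy room, loosely in the spirit of the text-based environments (such as ALFWorld) used in the ReAct paper, showing how `Look[]` and `Pick["key"]` can map to deterministic state changes and observation strings. `ToyRoom` and its goal condition are illustrative assumptions.

```python
from typing import List


class ToyRoom:
    """A tiny deterministic text environment with two actions: Look and Pick."""

    def __init__(self) -> None:
        self.items: List[str] = ["key", "lamp"]
        self.inventory: List[str] = []

    def step(self, tool: str, arg: str = "") -> str:
        """Apply one action and return the observation string."""
        if tool == "Look":
            if not self.items:
                return "The room is empty."
            return "You see: " + ", ".join(self.items) + "."
        if tool == "Pick":
            item = arg.strip().strip('"')
            if item not in self.items:
                return f"There is no {item} here."
            self.items.remove(item)
            self.inventory.append(item)
            return f"You picked up the {item}."
        return f"Unknown action {tool!r}. Valid actions: Look, Pick."

    def done(self) -> bool:
        """Example goal: the episode succeeds once the key is in the inventory."""
        return "key" in self.inventory


room = ToyRoom()
for tool, arg in [("Look", ""), ("Pick", '"key"')]:
    print(f"Action: {tool}[{arg}] -> Observation: {room.step(tool, arg)}")
print("Goal reached:", room.done())
```

Keeping the environment deterministic makes traces replayable, which is what lets you compare prompt or model versions on identical episodes.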

7. Engineering Trade-offs & Best Practices 🧠

- Prompt design: Use explicit instructions and a small grammar for actions, and include a few in-context examples.

- Action scoping: Design actions narrowly (e.g., `Search`, `CallAPI`, `ReadDoc`), and don't let the model run arbitrary code without checks.

- Cost management:
  - Use cheap tools (local DB, cache) before expensive ones (web search).
  - Limit the number of actions and the total budget per request.

- Safety & validation:
  - Validate action arguments on your service side.
  - Prevent dangerous or high-impact actions from being executed without verification.

- Scalability:
  - Separate the LLM worker pool from the tool-execution pool.
  - Use queues to handle requests and enforce limits.
  - Cache observations for repeated identical action calls.
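Several of these practices (tool allowlisting, a per-request budget, and caching identical calls) can live in one thin wrapper around your tool adapters. A minimal sketch; the per-tool cost units and the `BudgetedExecutor` name are hypothetical, and a production system would likely use a shared cache rather than an in-process dict.

```python
from typing import Callable, Dict, Tuple


class BudgetedExecutor:
    """Runs allowlisted tools, caches identical calls, and enforces a cost budget."""

    def __init__(self, tools: Dict[str, Tuple[Callable[[str], str], float]],
                 budget: float) -> None:
        self.tools = tools              # name -> (adapter, cost per uncached call)
        self.remaining = budget
        self.cache: Dict[Tuple[str, str], str] = {}
        self.calls = 0                  # uncached executions, useful for monitoring

    def run(self, tool: str, arg: str) -> str:
        if tool not in self.tools:
            return f"Error: tool {tool!r} is not allowed."
        key = (tool, arg)
        if key in self.cache:           # repeated identical call: served free from cache
            return self.cache[key]
        fn, cost = self.tools[tool]
        if cost > self.remaining:
            return f"Error: budget exhausted (need {cost}, have {self.remaining})."
        self.remaining -= cost          # charged even if the adapter raises
        self.calls += 1
        result = fn(arg)
        self.cache[key] = result
        return result
```

Assigning a zero or near-zero cost to local lookups and a higher cost to web search nudges the budget toward the "cheap tools first" ordering, though the controller still decides which tool to call.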

8. MLOps & Productionization Checklist ✅

As an ML Engineer, here are key things to track when deploying a ReAct-based system:

- Lineage & Audit Logging
  - Store full traces: thoughts, actions, observations, final answers.
  - Persist the model version, prompt template version, and token usage.

- Monitoring Metrics
  - Track tool usage frequency (which actions are used most).
  - Monitor action failure rates (API errors, invalid actions).
  - Observe verifier confidence distributions.

- Retraining / Tuning
  - Collect “good vs bad” traces (e.g., human-verified).
  - Train or fine-tune a reranker / verifier model to pick better final answers.
  - Periodically refresh the verifier or ranking logic.

- Security & Access Control
  - Enforce least-privilege access to tool adapters / APIs.
  - Sanitize tool inputs and outputs to guard against injection (including prompt injection carried in retrieved content) and PII leaks.
  - Maintain role-based access to action-execution logs.

- Fallback / Human in the Loop
  - If verifier confidence is low or actions are risky, route the trace to human review.
  - Provide a way to intervene, correct, or restart the chain.
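A minimal sketch of the logging and monitoring side: each step is written as one JSON line tagged with model and prompt-template versions, and simple metrics (tool usage frequency, action failure rate, total tokens) are computed from the log. The field names, the `StepRecord`, `log_step`, and `summarize` helpers, the version strings, and the convention that failed observations start with `Error:` are all assumptions, not a standard schema.

```python
import io
import json
from collections import Counter
from dataclasses import asdict, dataclass
from typing import Dict, Iterable, Optional, TextIO


@dataclass
class StepRecord:
    request_id: str
    step: int
    thought: str
    action: Optional[str]        # e.g. 'Search["ReAct"]'; None for the final step
    observation: Optional[str]
    model_version: str
    prompt_version: str
    tokens: int


def log_step(record: StepRecord, sink: TextIO) -> None:
    """Append one step as a JSON line (easy to ship to any log store)."""
    sink.write(json.dumps(asdict(record)) + "\n")


def summarize(lines: Iterable[str]) -> Dict[str, object]:
    """Compute tool-usage counts, action failure rate, and total tokens from JSONL logs."""
    usage: Counter = Counter()
    actions = failures = tokens = 0
    for line in lines:
        rec = json.loads(line)
        tokens += rec["tokens"]
        if rec["action"]:
            actions += 1
            usage[rec["action"].split("[", 1)[0]] += 1   # tool name before "["
            if (rec["observation"] or "").startswith("Error:"):
                failures += 1
    return {
        "tool_usage": dict(usage),
        "failure_rate": failures / actions if actions else 0.0,
        "total_tokens": tokens,
    }


buf = io.StringIO()  # stands in for a log file or stream
log_step(StepRecord("r1", 0, "look it up", 'Search["ReAct"]', "ReAct is a prompting method.",
                    "model-v1", "tmpl-v3", 120), buf)
log_step(StepRecord("r1", 1, "check calendar", "CallAPI[Calendar.list, u1]", "Error: timeout",
                    "model-v1", "tmpl-v3", 80), buf)
log_step(StepRecord("r1", 2, "answer", None, None, "model-v1", "tmpl-v3", 40), buf)
print(summarize(buf.getvalue().splitlines()))
```

One line per step (rather than one blob per request) makes it straightforward to aggregate by tool, model version, or prompt version later.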

9. Common Pitfalls & How to Avoid Them ⚠️

- Infinite loops / runaway actions: enforce a maximum action count, and detect or penalize repeated identical actions.

- Invalid or malicious actions: validate action syntax and parameters in your orchestrator.

- Model hallucinating actions: restrict the grammar, and reject tool names or arguments that are not on your allowlist.

- Over-reliance on LLM self-evaluation: pair it with deterministic checks wherever possible (schema validation, unit tests, rule-based verifiers).

- High cost from too many calls: batch, cache, and prune aggressively.
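For runaway loops, a hard step cap can be complemented by detecting when the model repeats the same action with the same argument, and feeding back a corrective observation instead of re-executing it. A minimal sketch; the `RepeatGuard` name, the repeat limit of 2, the whitespace/case normalization, and the message wording are arbitrary choices for illustration.

```python
from collections import Counter
from typing import Optional, Tuple


class RepeatGuard:
    """Tracks (tool, argument) pairs and flags calls repeated more than max_repeats times."""

    def __init__(self, max_repeats: int = 2) -> None:
        self.max_repeats = max_repeats
        self.seen: Counter = Counter()

    def check(self, tool: str, arg: str) -> Optional[str]:
        """Return a corrective observation for a runaway repeat, or None to allow the call."""
        # Normalize whitespace and case so trivially different repeats are caught too.
        key: Tuple[str, str] = (tool, " ".join(arg.split()).lower())
        self.seen[key] += 1
        if self.seen[key] > self.max_repeats:
            return (
                f"{tool}[{arg}] has already been run {self.max_repeats} times. "
                "Try a different action or give a Final Answer."
            )
        return None
```

In a controller, `check()` would run before executing an action; if it returns a message, that message goes back as the observation and the tool is not called.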

10. Further Reading & Resources 📚

- Original Paper: ReAct: Synergizing Reasoning and Acting in Language Models — Yao et al.

- Google Research Blog: How ReAct blends reasoning and tool use in LLMs.

- Open-source / Community: Agent frameworks like LangChain support ReAct-style patterns.

✨ Bottom line for ML Engineers: ReAct is a powerful, interpretable, and production-friendly paradigm for building LLM-based agents. It aligns well with MLOps needs (traceability, auditing, safety) and integrates cleanly with tool systems. If you're building agents that need both thinking and acting, ReAct is a go-to pattern.

🚀 Professional Prompt Engineering with PROMPT_ENGINE

Stop manually tweaking your prompts for every different model. Use the PROMPT_ENGINE AI Prompt Generator to apply standardized techniques instantly across GPT-4o, Claude 3.5, and Gemini Pro.

🛡️ Secure, Private & Local-First

- 100% Client-Side: No data is sent to our servers. All processing happens locally in your browser.

- Privacy-First: Your proprietary prompts are never stored, logged, or used for model training.

- Zero Latency: No account required. Just a fast, secure environment for your AI workflow.

Supported Frameworks & Techniques:

The PROMPT_ENGINE library includes a massive range of standardized templates, including:

- Chain-of-Thought (CoT): Force models to think step-by-step for complex reasoning.

- Few-Shot & Multi-Shot: Align tone and output using your own local examples.

- ReAct & Self-Ask: Structured templates for agentic workflows and tool-use.

- Persona & Role-Play: Calibrate model expertise for specialized professional tasks.

- Structured I/O: Standardized JSON, Markdown, and XML formatting for developers.

- And many more... including Meta-Prompting and Automatic Reasoning frameworks.
